Stack Exchange Network Status


Outage Postmortem: March 31, 2015

On 2015-03-31 at 10:00:00 UTC, it was midnight on April 1st in Samoa (GMT+14). At 10:23:14 UTC, we enabled StackEgg for all logged-in users across all sites on the Stack Exchange network. The additional AJAX hits to get game state heavily increased the load on our primary load balancers. In the initial deploy, there was one additional request per page load. Though this request was asynchronous and didn’t affect page load time for clients, it caused a significant increase in concurrent sessions on our load balancers. This resulted in a 6 minute public outage on Stack Overflow.

Three main factors went into this concurrent session build-up:

- The additional HTTP requests themselves were instantaneous and transient, meaning open/closed and done. The number of requests was non-trivial, peaking at 3,444 requests/sec before reduction measures took effect. This was additional load; the combined traffic peaked at 6,608 requests/sec.

- In order to increase performance in the rare (before today) cases where we load more than 1 resource directly from our load balancers, we employ HTTP Keep-Alive. This instructs the browser to hold the connection open. In our case, we had this tuned to 15 seconds. With 3,444 requests/sec and a 15s keep-alive, we were attempting to sustain an additional 51,660 concurrent sessions at the peak of the issue.

- In order to maintain sanity and prevent CPU overload, we cap our concurrent sessions at 40,000 in HAProxy on the frontend affected by the issue.
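The arithmetic behind these three factors can be sketched in a few lines. This is only a back-of-the-envelope model, not how HAProxy accounts for sessions: it assumes the worst case where every StackEgg request opens its own connection and that connection then sits idle for the full keep-alive window. The function and constant names are hypothetical, chosen for illustration.

```python
# Rough estimate: sessions held open = arrival rate x time each one is held.
# Assumption (not from HAProxy itself): each request opens a new connection
# that stays open for the whole keep-alive window.

STACKEGG_PEAK_RPS = 3_444      # peak StackEgg requests/sec, from the post
KEEPALIVE_SECS = 15            # keep-alive setting at the time
FRONTEND_SESSION_CAP = 40_000  # concurrent session cap on the frontend


def estimated_keepalive_sessions(requests_per_sec: int, keepalive_secs: int) -> int:
    """Worst-case number of connections held open by keep-alive."""
    return requests_per_sec * keepalive_secs


extra_sessions = estimated_keepalive_sessions(STACKEGG_PEAK_RPS, KEEPALIVE_SECS)
over_cap = extra_sessions > FRONTEND_SESSION_CAP

print(extra_sessions)  # 51660
print(over_cap)        # True
```

Under that assumption, the StackEgg requests alone would exceed the 40,000 cap before counting any regular traffic.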

Once we reached the 40,000 session capacity on the frontend, subsequent sessions were queued, waiting to finish their TCP connect. In the short term, whenever a batch of requests finished and brought us back under the threshold, load durations became sporadic, depending on where in the oscillation cycle you hit. If left unaddressed long-term, concurrent sessions would stay above the bar and result in TCP connection timeouts for users.

Upon identifying the bottleneck, we raised the concurrent sessions limit to relieve immediate pressure and began working to reduce the number of HTTP requests from StackEgg. Raising our limit on the frontend was a simple HAProxy change via Puppet. Reducing StackEgg requests had 2 pieces:

- Eliminating the initial AJAX request by embedding the needed data directly in the page

- Reducing how often we called for fresh data and increasing dependence on websockets to feed game data.

These simultaneous adjustments greatly reduced load on the load balancers but still kept us around 60,000 sessions at times of peak traffic. However, this was enough to address the issue on 2015-03-31 (UTC). The load from the StackEgg data route is now stable at 1,000 - 1,400 req/s.

On the morning of 2015-04-01 we again saw traffic increase to approximately 60,000 simultaneous sessions. We decided to temporarily lower HTTP Keep-Alive from 15s to 5s for the duration of StackEgg. This lowers the concurrent session load from the baseline traffic we began with as well as from StackEgg, possibly at the cost of reduced performance (which we will be measuring and testing). This dropped our sessions from about 59,500 down to ~19,000, where we remain stable.
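As a rough sanity check on that drop, a naive model in which concurrent sessions scale linearly with the keep-alive duration gives a figure in the same range. This is a simplification: it assumes sessions are dominated by idle keep-alive connections and ignores time spent actually serving requests. The function name is hypothetical.

```python
# Naive linear scaling: if almost every session is an idle keep-alive
# connection, session count should shrink in proportion to the timeout.
# This is an illustrative assumption, not a measured model.


def scaled_sessions(current_sessions: float, old_keepalive_secs: float,
                    new_keepalive_secs: float) -> float:
    """Predict concurrent sessions after changing the keep-alive duration."""
    return current_sessions * new_keepalive_secs / old_keepalive_secs


predicted = scaled_sessions(59_500, old_keepalive_secs=15, new_keepalive_secs=5)
print(round(predicted))  # 19833
```

The prediction of roughly 19,800 is close to the 19,689 concurrent sessions recorded at 15:37:38 UTC on April 1st, which is consistent with keep-alive being the dominant factor, though it does not prove it.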

Timeline of events (UTC)

Timeline for March 31st:

- 10:23:14 StackEgg enabled on all sites for everyone

- 11:32:00 General Site slowness is observed

- 11:55:00 SREs identify load balancer traffic bouncing

- 12:15:00 Traffic to StackEgg: 7,752,882 requests

- 12:53:23 Related Bosun frontend alerts identified

- 12:56:28 HAProxy frontend session limits upped

- 13:19:50 Built changes to further reduce load

- 13:41:15 Pingdom reports Stack Overflow offline

- 13:42:19 StackEgg disabled on Stack Overflow externally due to load

- 13:44:30 Flipping StackEgg into Employee overloads the web tier

- 13:46:13 StackEgg is turned fully off manually in database

- 13:47:00 Pingdom reports Stack Overflow online

- 14:07:31 More code optimizations built-out

- 14:30:25 Old JavaScript route is hard-killed in HAProxy

- 14:38:54 StackEgg data route shifted to differentiate traffic

- 14:50:32 StackEgg rollout to Stack Overflow begins

- 14:56:29 StackEgg re-enabled on all sites

- 15:02:39 All systems green - high but stable request load

Timeline for April 1st:

- 10:19:00 Sessions at approximately 60,000 concurrent

- 10:30:00 Decision: lower HTTP Keep-Alive from 15 to 5 seconds

- 10:31:47 Swapped load balancers to prep for changes

- 10:37:22 Keep-Alive duration change applied via HAProxy reload

- 10:38:31 Concurrent sessions drop to ~28,000

- 11:23:13 Concurrent sessions down to 23,047

- 15:37:38 Concurrent sessions down to 19,689

For a graphical view of some of the traffic issues…

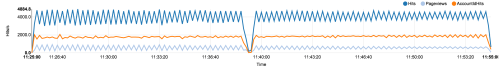

Here is what traffic bouncing against the 40,000 limit appeared as:

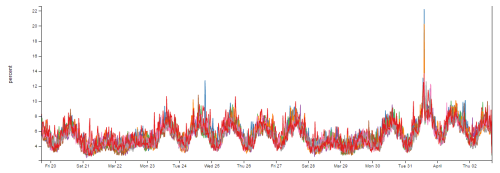

A view of sessions for the previous 2 weeks:

Web tier CPU over the same 2 weeks:

We will be keeping an eye on performance in our data center and measuring the effect of Keep-Alive duration via client timings now that StackEgg has passed. As a matter of practice, we hold an open internal discussion about any user-facing outage and will be doing the same here.